Abstract:

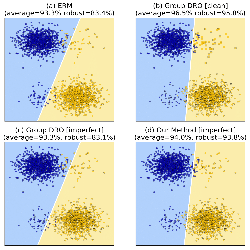

Contrastive learning has driven important advances in anomaly detection. Yet these techniques rely on clean training data, which cannot be guaranteed in real-world scenarios. This paper presents a theoretical interpretation of when and how contrastive learning alone fails to detect anomalies under data contamination. To address these shortcomings, we propose Elsa, a novel semi-supervised anomaly detection approach that unifies energy-based models with unsupervised contrastive learning. Elsa gains robustness across various practical scenarios through a carefully designed fine-tuning step that uses the energy function to divide the normal data into prototype classes, or subclasses, that reflect the heterogeneity of the data distribution. Using a small set of anomaly labels, Elsa improves anomaly detection performance in clean and contaminated data scenarios by 0.9 and 6.6 AUROC points, respectively.