Abstract:

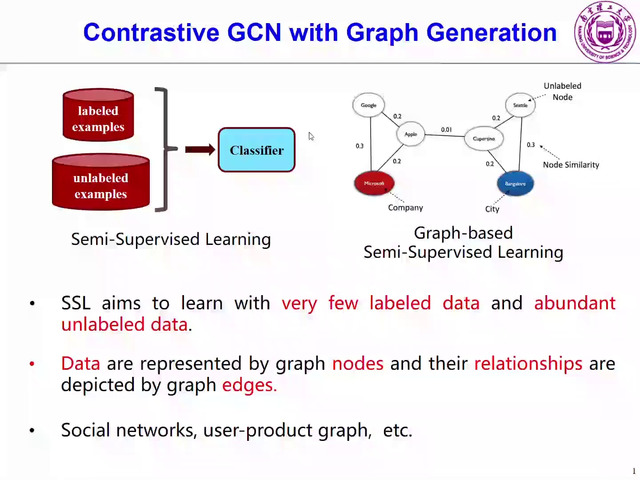

In this paper, we propose an end-to-end graph learning framework, namely \textbf{I}terative \textbf{D}eep \textbf{G}raph \textbf{L}earning (\alg), for jointly and iteratively learning graph structure and graph embedding. The key rationale of \alg is to learn a better graph structure based on better node embeddings, and vice versa (i.e., better node embeddings based on a better graph structure). Our iterative method stops dynamically when the learned graph structure is sufficiently close to the graph optimized for the downstream prediction task. In addition, we cast the graph learning problem as a similarity metric learning problem and leverage adaptive graph regularization to control the quality of the learned graph. Finally, by incorporating an anchor-based approximation technique, we further propose a scalable version of \alg, namely \salg, which significantly reduces the time and space complexity of \alg without compromising performance. Our extensive experiments on nine benchmarks show that the proposed \alg models consistently outperform or match state-of-the-art baselines. Furthermore, \alg can be more robust to adversarial graphs and can cope with both transductive and inductive learning.
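To make the alternation between graph structure and node embeddings concrete, the following is a minimal sketch of such a loop, not the \alg implementation itself. Several pieces are assumptions introduced purely for illustration: plain cosine similarity stands in for the learned similarity metric, a threshold `epsilon` sparsifies the graph, a single neighborhood-averaging step stands in for a trained graph neural network, and the loop stops when the relative squared Frobenius change between successive adjacency matrices falls below `delta`. All function and parameter names are hypothetical.

```python
import numpy as np


def cosine_similarity_graph(embeddings, epsilon=0.5):
    """Build a dense adjacency matrix from pairwise cosine similarity.

    Entries below `epsilon` are set to zero to sparsify the graph
    (an assumed choice for this sketch).
    """
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.maximum(norms, 1e-12)
    sim = unit @ unit.T
    return np.where(sim >= epsilon, sim, 0.0)


def row_normalize(adj):
    """Divide each row by its sum so that propagation averages over neighbors."""
    degree = adj.sum(axis=1, keepdims=True)
    return adj / np.maximum(degree, 1e-12)


def iterative_graph_learning(features, epsilon=0.5, delta=1e-3, max_iters=20):
    """Alternate between refining embeddings and refining the graph.

    Returns the final adjacency matrix, the final embeddings, and the
    number of iterations that were run.
    """
    adj = cosine_similarity_graph(features, epsilon)
    first_norm = max(float(np.sum(adj ** 2)), 1e-12)
    embeddings = features
    steps = 0
    for steps in range(1, max_iters + 1):
        # Better embeddings from the current graph (stand-in for a GNN layer).
        embeddings = row_normalize(adj) @ features
        # Better graph from the current embeddings.
        new_adj = cosine_similarity_graph(embeddings, epsilon)
        # Relative change between successive graphs (assumed stopping rule).
        change = float(np.sum((new_adj - adj) ** 2)) / first_norm
        adj = new_adj
        if change < delta:
            break
    return adj, embeddings, steps
```

On a toy input with two well-separated groups of nodes, for example three rows near `[1, 0]` and three rows near `[0, 1]`, the loop keeps strong edges within each group and removes edges across groups. In a trained model the similarity function and the embedding step would be learned jointly against the downstream task loss rather than fixed as they are here.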