Abstract:

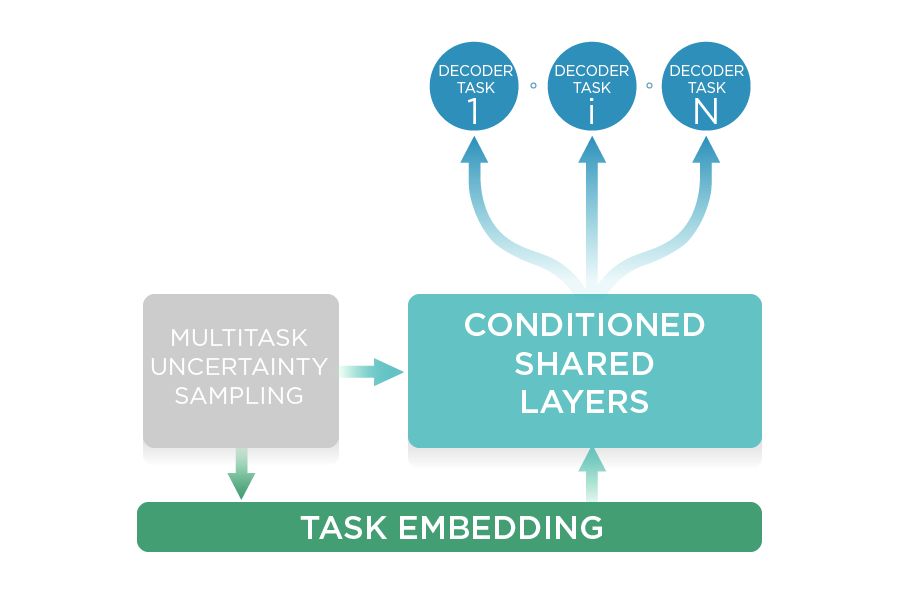

In recent years, researchers have shown an increased interest in recognizing the overlapping entities that have nested structures. However, most existing models ignore the semantic correlation between words under different entity types. Considering words in sentence play different roles under different entity types, we argue that the correlation intensities of pairwise words in sentence for each entity type should be considered. In this paper, we treat named entity recognition as a multi-class classification of word pairs and design a simple neural model to handle this issue. Our model applies a supervised multi-head self-attention mechanism, where each head corresponds to one entity type, to construct the word-level correlations for each type. Our model can flexibly predict the span type by the correlation intensities of its head and tail under the corresponding type. In addition, we fuse entity boundary detection and entity classification by a multitask learning framework, which can capture the dependencies between these two tasks. To verify the performance of our model, we conduct extensive experiments on both nested and flat datasets. The experimental results show that our model can outperform the previous state-of-the-art methods on multiple tasks without any extra NLP tools or human annotations.