Abstract:

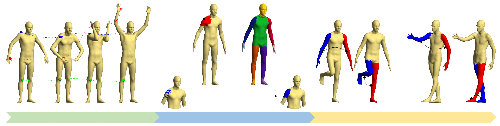

We present a method for decorating existing 3D shape collections by learning a texture generator from internet photo collections. We condition StyleGAN texture generation by injecting multiview silhouettes of a 3D shape with SPADE-IN. To bridge the inherent domain gap between the multiview silhouettes from the shape collection and the distribution of silhouettes in the photo collection, we train on a mixture of silhouettes from both collections. Furthermore, we do not assume that any exemplar in the photo collection is viewed from more than one vantage point; instead, we leverage multiview discriminators to promote semantic view-consistency across the generated textures. We validate our design and demonstrate its efficacy on three real-world 3D shape collections.
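To make the silhouette conditioning concrete, the following is a minimal sketch of a SPADE-style modulation applied after instance normalization, for a single feature channel and a single silhouette. It is an illustration under stated assumptions, not the implementation used in this work: the names `instance_norm` and `spade_in` and the scalar parameters `gamma_w`, `gamma_b`, `beta_w`, `beta_b` are hypothetical, and the scalar affine maps stand in for the learned convolutions that a real SPADE layer would apply to the (multiview) silhouettes.

```python
import math


def instance_norm(channel, eps=1e-5):
    """Normalize one H x W feature channel to zero mean and (near) unit variance."""
    values = [v for row in channel for v in row]
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    inv_std = 1.0 / math.sqrt(var + eps)
    return [[(v - mean) * inv_std for v in row] for row in channel]


def spade_in(channel, silhouette, gamma_w, gamma_b, beta_w, beta_b):
    """Modulate a normalized channel with a per-pixel scale and shift.

    Assumption: gamma and beta are scalar affine functions of the
    silhouette value at each pixel. A real SPADE layer would predict
    them with learned convolutions over the conditioning map.
    """
    normed = instance_norm(channel)
    out = []
    for n_row, s_row in zip(normed, silhouette):
        row = []
        for n, s in zip(n_row, s_row):
            gamma = gamma_w * s + gamma_b  # spatially varying scale
            beta = beta_w * s + beta_b     # spatially varying shift
            row.append(n * (1.0 + gamma) + beta)
        out.append(row)
    return out


# Toy 2 x 2 feature channel and a binary silhouette mask of the same size.
features = [[1.0, 2.0], [3.0, 4.0]]
silhouette = [[1.0, 0.0], [0.0, 1.0]]
modulated = spade_in(features, silhouette,
                     gamma_w=0.5, gamma_b=0.0, beta_w=1.0, beta_b=0.0)
```

Because the scale and shift vary per pixel, features inside the silhouette are modulated differently from those outside it, which is how the shape outline can steer where texture detail is generated.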