Abstract:

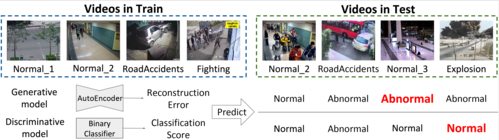

In this paper, we propose a new framework for an anti-litter visual surveillance system to prevent garbage dumping, as a real-world application. There have been many efforts to deploy action-recognition-based visual surveillance systems. However, many conventional methods overfit to specific scenes because they rely on hand-crafted rules and lack real-world data. To overcome this problem, we propose a novel algorithm that handles the diverse scene properties of real-world surveillance. In addition to collecting real-world data, we train a model to understand a person's behavior from multiple datasets covering human poses, coarse human actions (e.g., upright, bent), and fine actions (e.g., pushing a cart) via multi-task learning. As a result, our approach eliminates the need for scene-by-scene tuning and makes behavior understanding robust in a visual surveillance system. In addition, we propose a new object detection network that is optimized for detecting persons and carryable objects. The proposed detection network reduces the computational cost by restricting potential suspects to persons who carry an object. Our method outperforms state-of-the-art methods in detecting the garbage dumping action on a real-world surveillance video dataset.
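The suspect-filtering idea can be illustrated with a minimal sketch. It assumes a detector has already produced labeled bounding boxes, and it treats a person as "carrying" an object when a sufficient fraction of the object's box lies inside the person's box. This overlap rule, the `Box` type, the label names, and the `min_overlap` threshold are all hypothetical choices made for illustration; the abstract does not specify how the carrying relation is determined.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Box:
    label: str  # "person" or "object" (hypothetical label names)
    x1: float
    y1: float
    x2: float
    y2: float


def overlap_ratio(obj: Box, person: Box) -> float:
    """Fraction of the object's area that lies inside the person's box."""
    iw = min(obj.x2, person.x2) - max(obj.x1, person.x1)
    ih = min(obj.y2, person.y2) - max(obj.y1, person.y1)
    if iw <= 0 or ih <= 0:
        return 0.0
    obj_area = (obj.x2 - obj.x1) * (obj.y2 - obj.y1)
    return (iw * ih) / obj_area if obj_area > 0 else 0.0


def select_suspects(detections: List[Box], min_overlap: float = 0.3) -> List[Box]:
    """Keep only persons whose box sufficiently overlaps a carryable object.

    Assumption: box overlap stands in for the "carrying" relation.
    """
    persons = [d for d in detections if d.label == "person"]
    objects = [d for d in detections if d.label == "object"]
    return [
        p for p in persons
        if any(overlap_ratio(o, p) >= min_overlap for o in objects)
    ]


detections = [
    Box("person", 0, 0, 10, 20),   # overlaps the object below
    Box("person", 50, 0, 60, 20),  # no object nearby
    Box("object", 8, 10, 12, 14),
]
suspects = select_suspects(detections)
```

Under this assumption, only the selected persons would be passed on to the more expensive action-understanding stage, which is where the computational saving would come from.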