Abstract:

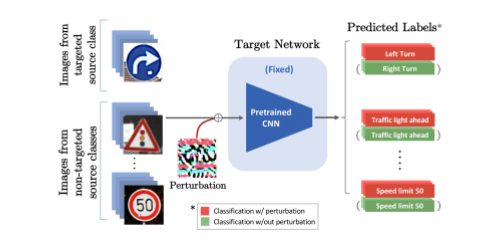

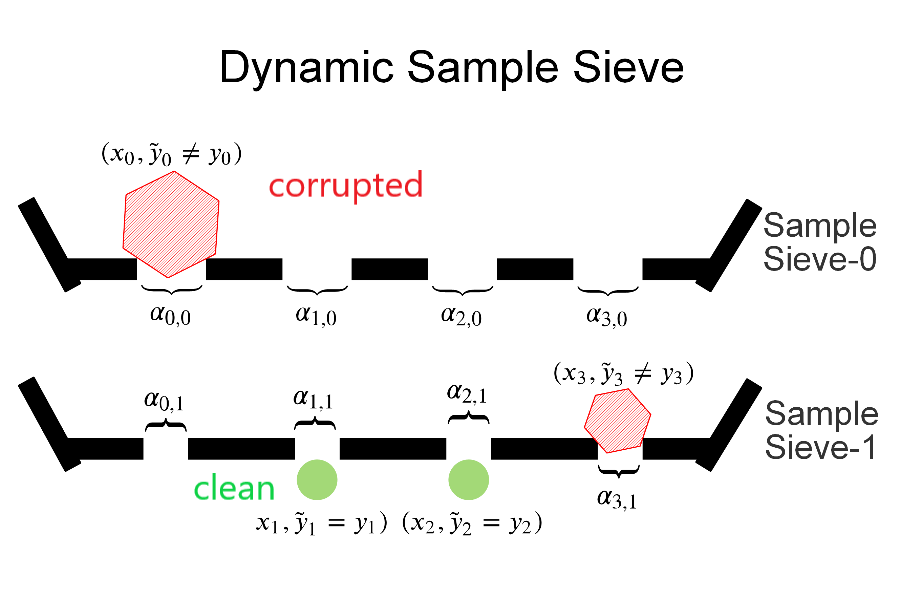

Despite the remarkable performance of deep neural networks on various computer vision tasks, they are known to be highly susceptible to adversarial perturbations, which makes it challenging to deploy them in real-world safety-critical applications. In this paper, we conjecture that the leading cause of this adversarial vulnerability is the distortion in the latent feature space, and provide methods to suppress it effectively. Specifically, we define \textbf{vulnerability} for each latent feature and then propose a new loss for adversarial learning, the \textbf{Vulnerability Suppression (VS)} loss, which aims to minimize the feature-level vulnerability during training. We further propose a Bayesian framework that prunes features with high vulnerability, reducing both the vulnerability and the loss on adversarial samples. We validate our \textbf{Adversarial Neural Pruning (ANP)} method on multiple benchmark datasets, on which it not only obtains state-of-the-art adversarial robustness but also improves the performance on clean examples, using only a fraction of the parameters used by the full network. Further qualitative analysis suggests that the improvements come from the suppression of feature-level vulnerability.