Abstract:

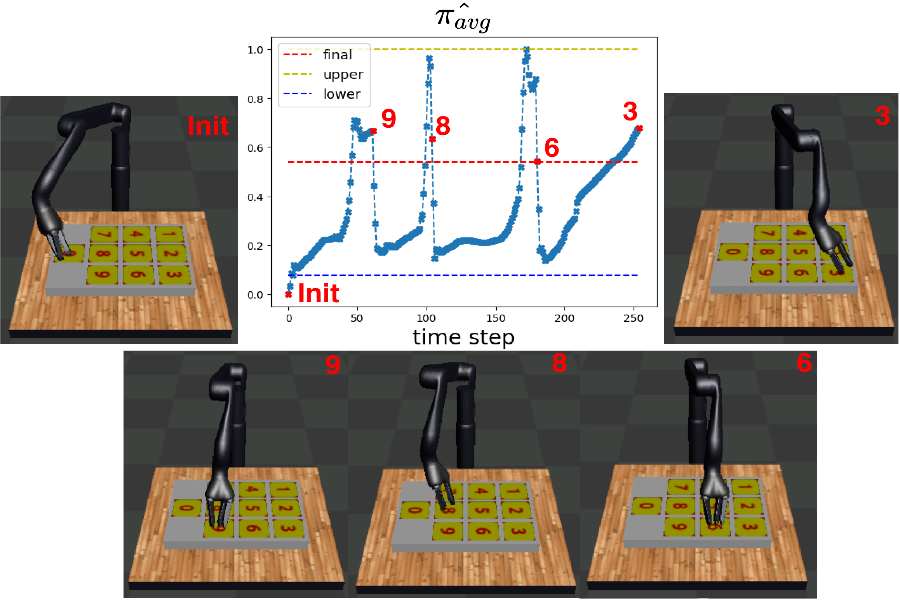

We present new planning and learning algorithms for use with the Refinement Acting Engine (RAE) acting procedure (Ghallab et al., 2016). RAE uses hierarchical operational models to perform tasks in dynamically changing environments. Our planning algorithm, UPOM, performs a UCT-like search in the space of operational models to tell RAE which operational model to use for each task. Our learning strategies acquire, from online acting experiences and/or simulated planning results, both a mapping from decision contexts to method instances and a heuristic function to guide UPOM. Our experimental results show that UPOM and our learning strategies significantly improve RAE’s performance in four test domains, as measured by two metrics: efficiency and success ratio.
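
To make the idea of a UCT-like choice among candidate methods concrete, the following is a minimal sketch and not the UPOM algorithm itself. It applies the standard UCB1 rule at a single decision point, whereas UPOM searches recursively through the space of operational models. The method names, success probabilities, and the `simulate` function are hypothetical placeholders introduced only for illustration.

```python
import math
import random

def ucb1_choose(stats, total_visits, c=math.sqrt(2)):
    """Return the method with the highest UCB1 score; untried methods go first."""
    for method, (visits, _) in stats.items():
        if visits == 0:
            return method
    return max(
        stats,
        key=lambda m: stats[m][1] / stats[m][0]
        + c * math.sqrt(math.log(total_visits) / stats[m][0]),
    )

def select_method(methods, simulate, n_rollouts=500, seed=0):
    """Run simulated rollouts and return the method with the best mean utility.

    Assumes n_rollouts >= len(methods), so every method is tried at least once.
    """
    rng = random.Random(seed)
    stats = {m: (0, 0.0) for m in methods}  # method -> (visits, total utility)
    for t in range(1, n_rollouts + 1):
        m = ucb1_choose(stats, t)
        visits, total = stats[m]
        stats[m] = (visits + 1, total + simulate(m, rng))
    tried = [m for m in stats if stats[m][0] > 0]
    return max(tried, key=lambda m: stats[m][1] / stats[m][0])

# Hypothetical candidate methods for one task, with made-up success probabilities.
SUCCESS_PROB = {"m_direct": 0.2, "m_detour": 0.9}

def simulate(method, rng):
    """Stand-in for a simulated execution: utility 1.0 on success, 0.0 on failure."""
    return 1.0 if rng.random() < SUCCESS_PROB[method] else 0.0

if __name__ == "__main__":
    print(select_method(list(SUCCESS_PROB), simulate))
```

The exploration constant `c` trades off trying rarely sampled methods against exploiting the one with the best observed average. In this sketch the final recommendation is the method with the highest mean utility over its rollouts.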